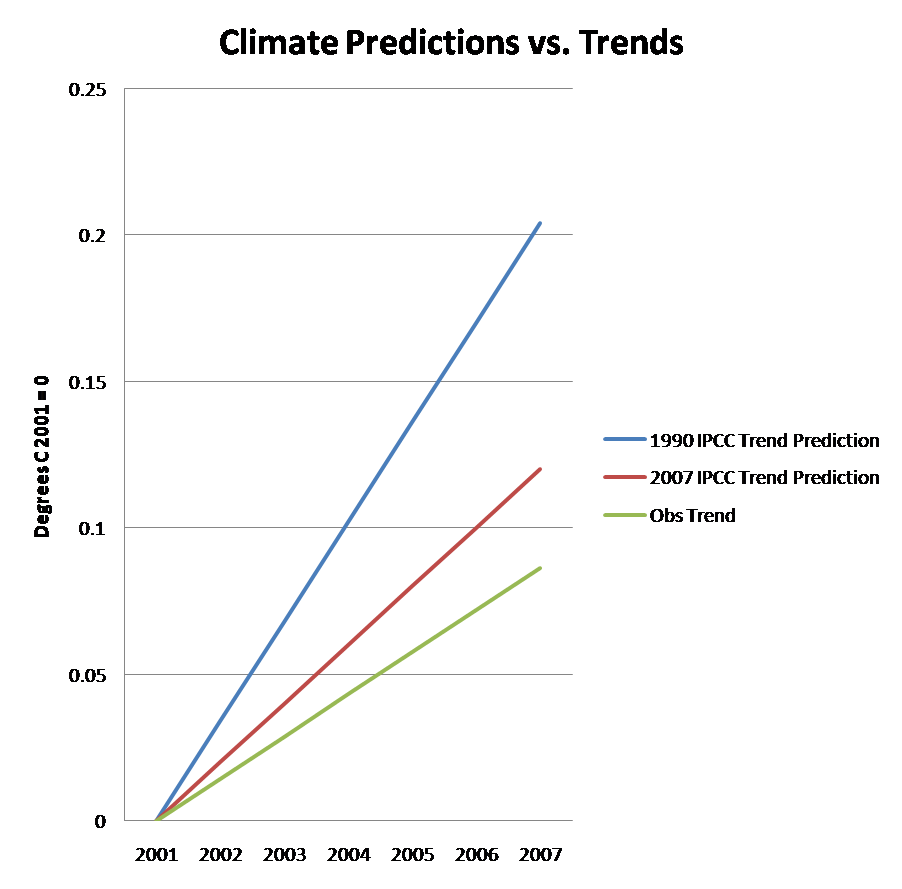

[UPDATE: Roger Pielke, Sr. tells us that we are barking up the wrong tree by looking at surface temperatures anyway. He says that the real action is in ocean heat content, for which predictions vary far less over short time periods than do surface temperatures. And he says that observations of accumulated heat content over the past 4 years “are not even close” to the model predictions. For the details, please see for yourself at his site.]

“Skill” is a technical term in the forecast verification literature that means the ability to beat a naïve baseline when making forecasts. If your forecasting methodology can’t beat some simple heuristic, then it will likely be of little use.
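In symbols, one widely used form of skill score compares the mean squared error (MSE) of a set of forecasts with the MSE of the naïve baseline over the same observations. This is offered as a sketch of the idea, and other error measures can be substituted:

$$
\mathrm{SS} = 1 - \frac{\mathrm{MSE}_{\mathrm{forecast}}}{\mathrm{MSE}_{\mathrm{baseline}}}, \qquad \mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n} \left(f_i - o_i\right)^2
$$

Here $f_i$ is the forecast (or the baseline value) and $o_i$ the observation for case $i$. A score above 0 means the forecasts beat the baseline, 0 means they merely tie it, 1 is a perfect forecast, and a negative score means the simple heuristic would have done better.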

What are examples of such naïve baselines? In weather forecasting, historical climatology is often used. So if the average temperature in Boulder for May 20 is 75 degrees and my prediction is for 85 degrees, then any observed temperature below 80 degrees, the midpoint between the two, will mean that my forecast had no skill, since climatology would have been closer to what actually happened. In the mutual fund industry, stock indexes are examples of naïve baselines used to evaluate the performance of fund managers. Of course, no forecasting method will show skill in every single forecast, so the appropriate metric is the degree of skill present across your forecasts. Like many other aspects of forecast verification, skill is a matter of degree, not black and white.
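A minimal Python sketch of the Boulder example, assuming the simple rule that a single forecast shows skill only when it lands strictly closer to the observation than climatology does (so a tie at 80 counts as no skill), plus the MSE-based skill score for a series of forecasts. The function names `has_skill` and `skill_score` and the three-day series are hypothetical, made up for illustration:

```python
def has_skill(forecast: float, baseline: float, observed: float) -> bool:
    """Single-forecast check: True only if the forecast is strictly closer
    to the observation than the naive baseline (a tie counts as no skill)."""
    return abs(forecast - observed) < abs(baseline - observed)


def skill_score(forecasts, baselines, observations) -> float:
    """MSE-based skill score: 1 - MSE(forecast) / MSE(baseline).
    Positive means the forecasts beat the baseline on average."""
    n = len(observations)
    mse_f = sum((f - o) ** 2 for f, o in zip(forecasts, observations)) / n
    mse_b = sum((b - o) ** 2 for b, o in zip(baselines, observations)) / n
    if mse_b == 0:
        raise ValueError("baseline is perfect; skill score is undefined")
    return 1.0 - mse_f / mse_b


CLIMATOLOGY = 75.0  # Boulder May 20 average from the example, degrees F
FORECAST = 85.0     # the forecast from the example, degrees F

# Observations at or below 80 (the midpoint) give no skill; above 80 they do.
for observed in (70.0, 78.0, 80.0, 82.0, 90.0):
    print(observed, has_skill(FORECAST, CLIMATOLOGY, observed))

# Hypothetical three-day series: skill as a matter of degree, not yes/no.
print(skill_score([85.0, 80.0, 78.0], [CLIMATOLOGY] * 3, [82.0, 74.0, 77.0]))
```

With these made-up numbers the series earns a modest positive score (about 0.15): better than climatology, but far from perfect, which is the sense in which skill comes in degrees.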

Skill is preferable to “consistency,” if only because adding bad forecasts to a forecasting ensemble does not improve skill unless it also improves forecast accuracy, which is not the case with certain measures of “consistency,” as we have seen. Skill also provides a clear metric of success for forecasts, once a naïve baseline is agreed upon. As time goes on, forecasts such as those issued by the IPCC should tend toward increasing skill, as the gap between a naïve forecast and the prediction grows. If a forecasting methodology shows no skill, then it would be appropriate to question its usefulness and/or accuracy.
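A hypothetical sketch of why padding an ensemble can buy “consistency” without buying skill. Here “consistency” is assumed to mean only that the observation falls within the ensemble's min-max range, skill is judged on the ensemble mean against a naïve baseline, and every number is invented for illustration rather than taken from any IPCC forecast:

```python
from statistics import mean


def is_consistent(ensemble: list[float], observed: float) -> bool:
    """Weak 'consistency' (an assumption): observation lies within the min-max range."""
    return min(ensemble) <= observed <= max(ensemble)


def abs_error(ensemble: list[float], observed: float) -> float:
    """Absolute error of the ensemble mean against the observation."""
    return abs(mean(ensemble) - observed)


OBSERVED = 0.1  # hypothetical observed value
BASELINE = 0.0  # hypothetical naive forecast (e.g., "no change")

ensemble = [0.30, 0.35, 0.40]
padded = ensemble + [-2.0]  # add one deliberately bad member

baseline_error = abs(BASELINE - OBSERVED)
for name, members in (("original", ensemble), ("padded", padded)):
    err = abs_error(members, OBSERVED)
    # name, consistent?, ensemble-mean error, beats the naive baseline?
    print(name, is_consistent(members, OBSERVED), round(err, 4), err < baseline_error)
```

The one wild member stretches the ensemble's range until the observation falls inside it, so the padded ensemble becomes “consistent,” yet its mean moves further from the observation and it still fails to beat the naïve baseline.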

In this post I use the IPCC forecasts of 1990, 2001, and 2007 to illustrate the concept of skill, and to explain why it is a much better metric than “consistency” for evaluating IPCC forecasts.
