A(nother) Problem with Scientific Assessments

June 23rd, 2006 Posted by: Roger Pielke, Jr.

The American Geophysical Union released an assessment report last week titled “Hurricanes and the US Gulf Coast” which was the result of a “Conference of Experts” held in January, 2006. One aspect of the report illustrates why it is so important to have such assessments carefully balanced with participants holding a diversity of legitimate scientific perspectives. When such diversity is not present, it increases the risks of misleading or false science being presented as definitive or settled, which can be particularly problematic for an effort intended to be “a coordinated effort to integrate science into the decision-making processes.” In this particular case the AGU has given assessments a black eye. Here are the details:

The AGU Report includes the following bold claim:

There currently is insufficient skill in empirical predictions of the number and intensity of storms in the forthcoming hurricane season. Predictions by statistical methods that are widely distributed also show little skill, being more often wrong than right.

Such seasonal predictions are issued by a number of groups around the world, and are also an official product of the U.S. government’s Climate Prediction Center. If these groups were indeed publishing forecasts with no (or negative) skill, then there would be good reason to ask them to cease immediately and get back to research, lest they mislead the public and decision makers.

As it turns out, the claim by the AGU is incorrect or, at a minimum, is a minority view among the relevant expert community. According to groups responsible for providing seasonal forecasts of hurricane activity, their products do indeed have skill. [Note: Skill refers to the relative improvement of a forecast over some naïve baseline. For example, if your actively managed mutual fund makes money this year, but does not perform better than an unmanaged index fund, then your fund’s manager has shown no skill – no added value beyond what could be done naively.] Consider the following:

1. Tropical Storm Risk, led by Mark Saunders, finds that its (and other) forecasts of 2004 and 2005 demonstrated excellent skill according to a number of metrics:

Lea, A. S. and M. A. Saunders, How well forecast were the 2004 and 2005 Atlantic and U.S. hurricane Seasons? in Proceedings of the 27th Conference on Hurricanes and Tropical Meteorology, Monterey, USA, April 24-28 2006. (PDF)

For further details see this paper:

Saunders, M. A. and A. S. Lea, Seasonal prediction of hurricane activity reaching the coast of the United States, Nature, 434, 1005-1008, 2005. (PDF)

2. Phil Klotzbach of Colorado State University, now responsible for issuing the forecasts of the William Gray research team, provides a number of spreadsheets with data showing that their forecasts demonstrate skill:

Seasonal skill excel file

August monthly forecast skill excel file

September monthly forecast skill excel file

October monthly forecast skill excel file

Klotzbach writes in an email: “All three of our monthly forecasts have shown skill with respect to the previous five-year monthly mean of NTC, using MSE (mean-squared error) as our skill metric. Here are our skills (% value is the % improvement over the previous five-year mean):

August Monthly Forecast: 38%

September Monthly Forecast: 2%

October Monthly Forecast: 33%”
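For readers who want to see how a percentage like this is computed, here is a minimal sketch of an MSE-based skill score measured against a trailing five-year mean. It assumes the common form skill = 1 - MSE(forecast) / MSE(baseline); the function names and all of the numbers are made up for illustration and are not the Colorado State team's data or code.

```python
from statistics import mean


def mse(predictions, observations):
    """Mean-squared error between paired predictions and observations."""
    return mean((p - o) ** 2 for p, o in zip(predictions, observations))


def trailing_mean_baseline(observations, window=5):
    """Naive forecast for each year: the mean of the previous `window` years.

    Returns one value per year starting at index `window`.
    """
    return [mean(observations[i - window:i])
            for i in range(window, len(observations))]


def mse_skill_percent(forecasts, observations, baseline):
    """Percent improvement in MSE over the baseline (assumed definition).

    100 is a perfect forecast, 0 matches the baseline, and a negative
    value means the forecast did worse than the naive baseline.
    """
    return 100.0 * (1.0 - mse(forecasts, observations) / mse(baseline, observations))


# Hypothetical yearly counts, invented purely for illustration.
observed = [8, 12, 10, 14, 6, 11, 15, 9]
forecast = [10, 13, 10]                       # issued for the last three years
baseline = trailing_mean_baseline(observed)   # naive forecasts for those years
verification = observed[5:]                   # what actually happened

skill = mse_skill_percent(forecast, verification, baseline)
print(f"Skill over the five-year mean: {skill:.1f}%")
```

On this definition a forecast that merely ties the five-year mean scores 0%, so any positive percentage means the forecast added value beyond the naive baseline.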

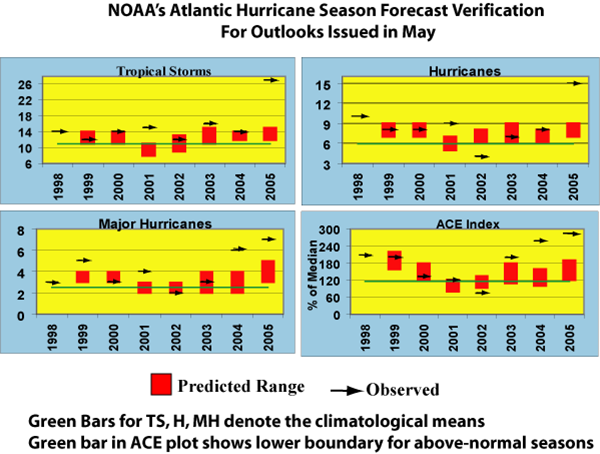

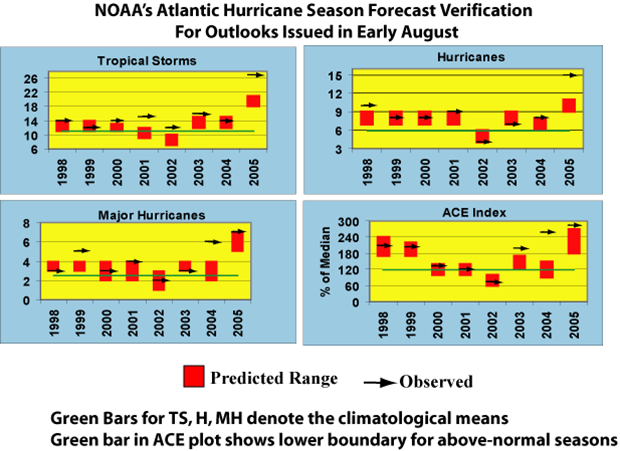

3. NOAA’s Chris Landsea provides the following two figures which show NOAA’s seasonal forecast performance.

He writes in an email: “You can see that we do okay in May (4 out of 7 seasons correctly forecasting number of hurricanes for example), but better in August (6 of 8 seasons correct).”

He also provides a link to this peer-reviewed paper:

Owens, B. F., and C. W. Landsea, 2003: Assessing the skill of operational Atlantic seasonal tropical cyclone forecasts, Weather and Forecasting, Vol. 18, No. 1, pp.45-54. (PDF)

From this information, it seems clear that there are strong claims in support of the skill of seasonal hurricane forecasts and relevant peer-reviewed literature. The AGU statement is therefore misleading and more likely just plain wrong. It certainly is not a community consensus perspective that one might expect to find in an assessment report.

What is going on here? Perhaps the AGU’s committee was unaware of this information, which if so would make one wonder about their “expert” committee. Given the distinguished people on their committee, I find this an unlikely explanation. Instead, it may be that the issue of seasonal hurricane forecasting has gotten caught up in the “climate wars.” William Gray is the originator of seasonal climate forecasts and has rudely dismissed the notion of human-caused global warming, much less a connection to hurricanes. One of the lead authors of the AGU assessment has been in a public feud with Bill Gray and is a strong advocate of a human role in recent hurricane activity. It is not unreasonable to think that the AGU assessment was being used as a vehicle to advance this battle under the guise of community “consensus.” It may be the perception among some that if Bill Gray’s or NOAA’s work on seasonal forecasts, which is based on various natural climatic factors, can be shown to be fundamentally flawed, then this would elevate the importance of alternative explanations.

If this hypothesis explaining what is going on is indeed the case, then it would be a serious misuse of the AGU for the advancement of personal views, unrepresentative of the actual community perspective. It would also represent a complete failure of the AGU’s assessment process. Given that there is peer-reviewed literature indicating the skill of seasonal forecasts, and none that I am aware of making the case for no skill, the AGU has given consensus assessments a black eye, and in the process provided incorrect or misleading information to decision makers.

The AGU case may be isolated, but it does raise the question posed by my father and others: how can we know whether scientific assessments faithfully represent the relevant community of experts versus a subset with an agenda posing under the guise of consensus? I am aware of no systematic approaches to answering this question. It is a question that needs discussion, because as political, personal, and other issues infuse the scientific enterprise, blind trust in disinterested science and science institutions no longer seems to be enough.

June 23rd, 2006 at 8:07 am

This comment from Phil K.:

“One suggestion, with regards to our skill, I think it would be better to post our seasonal skill as opposed to the skill of our monthly forecasts, since our monthly forecasts have only been issued for six, four and three years respectively for August, September and October. Our seasonal forecasts from June and August have been issued since 1984, so they probably are a better evaluation of our true skill. With regards to the same metric (mean-squared error with respect to the five-year previous mean), here is the skill of our seasonal forecasts:

From 1 June:

Named Storms: 28%

Named Storm Days: 26%

Hurricanes: 23%

Hurricane Days: 37%

From 1 August:

Named Storms: 63%

Named Storm Days: 38%

Hurricanes: 49%

Hurricane Days: 43%”

June 23rd, 2006 at 8:18 am

One indication of too much lab time is a lack of understanding of orders of magnitude in real world systems. Of course hurricanes are affected by an increase in temperature, and of course temperature is affected by an increase in atmospheric CO2. The question is *how much*. Is it sensible? Does it offset potential gains?

Just because “a” causes “b” doesn’t mean that all of “b” was caused by “a”. E.G. summer deaths from excessive heat are followed by a decrease in the death rate, indicating many of those who die of heat are on the verge anyway. Cold takes pretty much anybody, and there is not an equivalent decrease in the death rate after a cold snap.

June 23rd, 2006 at 2:29 pm

“insufficient” = “no (or negative)” !?

Puh-leese, Roger. You might also have observed that the report spent a lot of time on recommendations for improving skill. Of course.

“As it turns out the claim by the AGU is incorrect, or at a minimum, is a minority view among the relevant expert community.”

And this claim is based on…?

June 23rd, 2006 at 3:47 pm

Blind trust in disinterested science and science institutions was never enough.

June 23rd, 2006 at 4:28 pm

Steve B.-

Thanks for your comments. The following statement in the AGU report means negative skill: “Predictions by statistical methods that are widely distributed also show little skill, being more often wrong than right.”

I have provided links to 3 peer-reviewed papers that demonstrate statistical skill in seasonal hurricane forecasting. There are none that I am aware of that support the AGU statement. The claim is a minority view in the relevant scientific community. Though if you have evidence, data, or information to the contrary, please do provide it.

Thanks.

June 26th, 2006 at 2:38 am

It is really quite tragicomical to read this post alongside your post on the NAS-panel. Are you really this blind to yourself?

I don’t know how to take you seriously anymore.